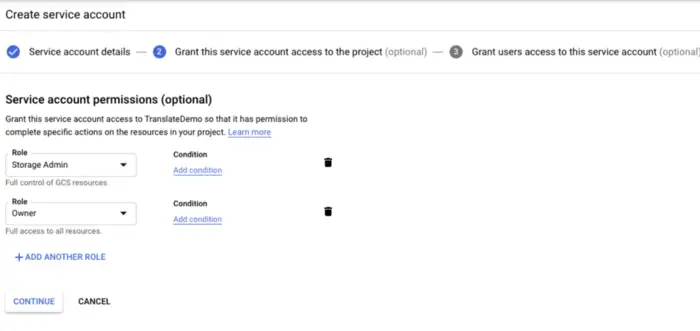

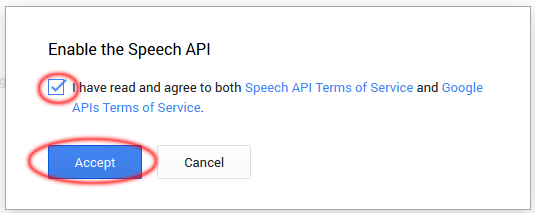

The name "Tiny LLMs" does not make sense. Tiny is kind of the opposite of large. Large usually means >=7B parameters (2023). If it's less, we would just call it a language model, not a large language model.

And "language model" has a very specific meaning: it models text. Usually we mean an auto-regressive language model, i.e. the input is partial text and the output is the prediction of the following text, although there are also other kinds of language models. Language model always means text-only. E.g., a model for speech recognition is a speech recognition model, not a language model. You might use a language model in addition here (shallow fusion etc.), but it's not necessary (end-to-end models). None of the models I see here are language models.

So, to put a title here, you could maybe use "neural network models in browser" or so. "Natural language processing (NLP)" has a different meaning (compared to "language model") and includes speech recognition. Sometimes this was also referred to as "human language technology (HLT)", which would also include all those models.

It would also be nice to add a bit more detail on what kind of models we see here, how large they are, etc. For speech recognition, I see that this uses a port of Whisper to the web based on the Transformers.js library. That uses ONNX, and the standard conversion is via Hugging Face Optimum, which usually would do some dynamic quantization. So maybe the "tiny large" is referring to that. But I did not really find out which Whisper model this is based on, i.e. whether it is Whisper-large (which is still not too large with 1.5B parameters).

Read wav audio files and get their audio chunks transcribed by Google Speech API in real time.

This program takes an audio file as an input and uses the Google Speech API to output a text transcription. The audio file is read in real time (the bitrate is taken from the .wav header information), and synchronized transcription requests are made to Google's server over the nodejs-speech NPM package.

Requirements:

- Node.js: version 5 and above should work.
- A Google Speech API enabled project.
- The GOOGLE_APPLICATION_CREDENTIALS environment variable must be properly set. If you need to get a service account, enable the Cloud Speech API for your project and then set GOOGLE_APPLICATION_CREDENTIALS to the file path of the JSON file that contains your service account key.

Usage: node readAndTranscribe.js

- filename: Audio file to transcribe, a WAV container. If not present, audio is read from standard input.
- -l, -lang: Transcription language code.
- -s, -silence: Frames with energy under this value will be considered silence.
- -e, -eof: The maximum number of tries to consider that the end of file (EOF) has been reached. This lets the program transcribe a file that is being continuously fed with new audio data.
- -m, -minaudio: The minimum acceptable duration of speech to trigger a recognition request. If we're under this value before issuing a recognition ...
- -M, -maxaudio: The maximum acceptable duration of speech to trigger a recognition request. If we don't detect any silence and the audio buffer is about to exceed this value, then we force a recognition request to Google.

Example: read content from a local audio file /tmp/audio-content.
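The way the -silence, -minaudio and -maxaudio options interact can be sketched roughly as follows. This is only an illustration under stated assumptions, not the actual code of readAndTranscribe.js: the function names `frameEnergy` and `segmentFrames`, the use of mean squared amplitude as the energy measure, and the fixed `frameSeconds` frame duration are all hypothetical.

```javascript
// Hypothetical helpers; names and the energy measure are assumptions,
// not taken from readAndTranscribe.js.

// Mean squared amplitude of one frame of PCM samples.
function frameEnergy(frame) {
  let sum = 0;
  for (const sample of frame) sum += sample * sample;
  return frame.length > 0 ? sum / frame.length : 0;
}

// Groups frames into chunks; each chunk would trigger one recognition request.
// A chunk is closed when:
//   - a silent frame arrives and at least minAudio seconds are buffered, or
//   - adding one more frame would exceed maxAudio seconds (forced request), or
//   - the input ends with audio still buffered.
function segmentFrames(frames, { silence, minAudio, maxAudio, frameSeconds }) {
  const chunks = [];
  let current = [];
  for (const frame of frames) {
    current.push(frame);
    const duration = current.length * frameSeconds;
    const isSilent = frameEnergy(frame) < silence;
    if (isSilent && duration >= minAudio) {
      chunks.push({ frames: current, reason: 'silence' });
      current = [];
    } else if (duration + frameSeconds > maxAudio) {
      chunks.push({ frames: current, reason: 'max' });
      current = [];
    }
  }
  if (current.length > 0) chunks.push({ frames: current, reason: 'eof' });
  return chunks;
}
```

In a real program each returned chunk would be sent to the speech service as it is produced; returning them as a list here just keeps the segmentation rule easy to inspect in isolation.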
